With Solr now being the default and recommended search provider in Sitecore 9 for all setups except XM1 (and required for xConnect) I wanted to look at how to run Solr with Docker.

My requirements/reasons for wanting to run Solr in a Docker container:

- Not having to install Java (nobody wants that these days)

- Easy install of Solr "as a service" (I can't be the only one thinking it's a bit of a hassle to set up on Windows, right?)

- Run Solr only when needed

- Run different versions of Solr (or quickly switch between versions)

During my research and experiments I got to thinking about SQL Server as well. It is not too long ago that it started being supported on Linux and I found out that Microsoft have made some SQL Server 2017 docker images - so why not try and get Sitecore running with that as well?

Overview

I will go through the steps for configuring the different containers and getting Sitecore 9 XP0 installed using the Sitecore Installation Framework (SIF) with both SQL Server 2017 and Solr 6.6.2 running in containers in Docker.

Prepare yourself, this is a long one...

- Why I chose Linux containers

- Prerequisites

- Setting up the containers

  - 1.1 Building a custom image for the nginx HTTPS proxy

- Running the containers and importing the created certificate

- Modify SIF config files

- Create and run install script with SIF-less

- Continue with the post-installation steps in Sitecore installation guide

- Closing notes

- Troubleshooting

- Additional resources

I've made the files needed for the Docker setup available on GitHub.

Why I chose Linux containers

Docker (on Windows 10) can run either Linux containers or Windows containers - but not both at the same time. I could have gone with Windows containers, which would have enabled me to also run IIS and potentially the xConnect service in containers as well, but I decided against that for several reasons.

There are more Linux images available, and they have a bigger community behind them than Windows images, which have only been reasonably well supported by Docker fairly recently. I also think it's easier to create custom images in Linux using apt-get or apk add (and I know almost nothing about Linux) compared to Windows, where you usually download a file from a specific URL, unzip it and so on, all from the command line. Windows is better suited to a GUI, so doing all of this from the command line gets a bit cumbersome.

Also, the Sitecore Installation Framework (SIF) tinkers with your local machine with regards to IIS websites, application pools and so on, so I would have had to customize that as well if I wanted to use SIF for the installation.

Prerequisites

- Download and install Sitecore Installation Framework and Sitecore Fundamentals (they are listed on the Sitecore 9 download page)

- Download the Sitecore 9.0 XP Single (XP0) package or whichever package is relevant for you.

- Download and install Docker

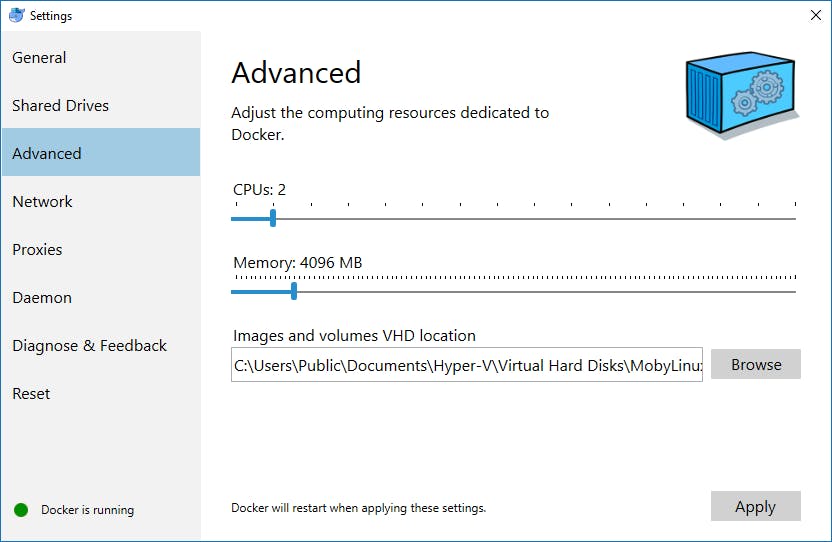

You will need to configure Docker to have at least 3.25 GB memory available (required for SQL Server). Right click the tray icon and go to Settings. Go to the Advanced tab and adjust the slider for the available memory.

1. Setting up the containers

For my current setup we need 3 services running in Docker:

- Solr

- SQL Server

- nginx (proxying HTTPS for Solr)

Instead of the nginx proxy you could also create a custom image where you configure SSL for Solr, but I found this solution, using nginx, easier to set up.

To set all this up we need to create a few files. We start by composing our services for the docker-compose command with a docker-compose.yml file.

docker-compose.yml

version: '3'

services:
  sql2017:
    image: microsoft/mssql-server-linux:2017-latest
    ports:
      - "1433:1433"
    volumes:
      - ./Sqldata:/var/opt/mssql
    environment:
      ACCEPT_EULA: "Y"
      SA_PASSWORD: Qwerty123

  solr662:
    image: solr:6.6.2
    expose:
      - "8983" # Only exposes the port to other services, not to the host
    volumes:
      - ./Solrdata:/solrhome
    environment:
      SOLR_HOME: /solrhome
      INIT_SOLR_HOME: "yes"

  proxy:
    build:
      context: .
      args:
        SERVER_NAME: solr
    image: nginx-solr-proxy
    ports:
      - "8983:443"
    volumes:
      - ./Certs:/certs
    environment:
      SERVER_NAME: solr
      PROXY_PASS: http://solr662:8983
      PROXY_REDIRECT: http://solr662/solr/ https://solr:8983/solr/
    depends_on:
      - solr662

What all this does is configure 3 services: sql2017, solr662 and proxy. Each service specifies an image (proxy actually builds its own, more on that in a bit), exposes some ports, mounts some folders so we can persist data and configures some relevant environment variables.

- sql2017

  - Use the official microsoft/mssql-server-linux image, tag 2017-latest

  - Bind port 1433 on host machine to port 1433

  - Mount the folder ./Sqldata to where the databases are stored inside the container

- solr662

  - Use the official solr image, tag 6.6.2

  - Expose port 8983 only to the other services

  - Mount the folder ./Solrdata to the Solr home folder inside the container

- proxy

  - Build a new image (uses the Dockerfile file as explained later) with name nginx-solr-proxy and use this image

  - Bind port 8983 on host machine to port 443

  - Mount the folder ./Certs. This folder will contain the SSL certificate used by the proxy. This will need to be installed into your root certificate store to be trusted (Chrome will still complain because it's not signed by a trusted CA, but SIF will work).

NOTE: This setup requires you to add 127.0.0.1 solr to your hosts file. If you want to use another hostname just change the value of the SERVER_NAME variable both under build: and environment: and change the hostname of the last part of the PROXY_REDIRECT environment variable to match, e.g. PROXY_REDIRECT: http://solr662/solr/ https://YOUR_CUSTOM_HOSTNAME:8983/solr/.
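Since the hostname has to agree in three places, a small shell sketch can print the values that depend on it and check a hosts file for the entry. This assumes a POSIX shell on the host (for example Git Bash or WSL); SOLR_HOST, print_hostname_settings and has_hosts_entry are names made up for this example and are not part of the setup files.

```shell
#!/bin/sh
# Sketch only: SOLR_HOST and both function names are hypothetical.
SOLR_HOST="${SOLR_HOST:-solr}"

# Print the hosts-file line and the docker-compose.yml values that depend on the hostname.
print_hostname_settings() {
  host="$1"
  printf '%s\n' "hosts file:      127.0.0.1 ${host}"
  printf '%s\n' "SERVER_NAME:     ${host}"
  printf '%s\n' "PROXY_REDIRECT:  http://solr662/solr/ https://${host}:8983/solr/"
}

# Return 0 if the given hosts file maps 127.0.0.1 to the hostname.
# Commented-out lines are ignored; the hostname is treated as a regular expression.
has_hosts_entry() {
  file="$1"
  host="$2"
  grep -Eq "^[[:space:]]*127\.0\.0\.1[[:space:]]+([^#]*[[:space:]])?${host}([[:space:]]|\$)" "$file"
}

print_hostname_settings "$SOLR_HOST"
```

On Windows the hosts file is C:\Windows\System32\drivers\etc\hosts, which Git Bash typically exposes as /c/Windows/System32/drivers/etc/hosts, so the check could be run as has_hosts_entry /c/Windows/System32/drivers/etc/hosts solr. Using the hostname as a regular expression is fine for simple names like solr but is only an approximation for names containing dots.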

Later on, when the services are up and running, you will have to install the snakeoil.cer certificate located in the mounted ./Certs folder.

1.1 Building a custom image for the nginx HTTPS proxy

For the nginx proxy we need to customize the bare-bones nginx image to include a newly created SSL certificate and configure nginx to our needs. This can be done in many ways and my way probably isn't the best way (it can definitely be improved), but it works pretty well in my opinion. In any case it's a good starting point.

To do this we need three files:

- Dockerfile - specifies how to build the image

- docker-entrypoint.sh - run each time the image is run

- .dockerignore - ignores files not needed by Dockerfile

Dockerfile

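FROM nginx:mainline-alpine

# Hostname used as the certificate subject (set via build args in docker-compose.yml)
ARG SERVER_NAME=solr
ENV SSL_CERTIFICATE=/etc/ssl/certs/snakeoil.cer
ENV SSL_CERTIFICATE_KEY=/etc/ssl/private/snakeoil.key

# Generate an EC private key and a self-signed certificate valid for 3650 days
RUN apk add --no-cache openssl \
    && openssl ecparam -out ${SSL_CERTIFICATE_KEY} -name prime256v1 -genkey \
    && openssl req -new -key ${SSL_CERTIFICATE_KEY} -x509 -sha256 -nodes \
       -days 3650 -subj "/CN=${SERVER_NAME}" -out ${SSL_CERTIFICATE}

# Remove the default nginx config files
RUN rm /etc/nginx/conf.d/*.conf

# Copy docker-entrypoint.sh (everything else is excluded by .dockerignore)
COPY . /

ENTRYPOINT ["/docker-entrypoint.sh"]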

.dockerignore

**/*

!docker-entrypoint.sh

This pulls the official nginx image with the tag mainline-alpine (the alpine version has a smaller footprint) and uses it as the base for our new image.

Then we create the certificate and key with the specified SERVER_NAME (as specified in docker-compose.yml or defaulting to solr) as the subject of the certificate.

Then we clear out the default nginx config files and copy everything in the current directory that is not ignored by .dockerignore (i.e. only docker-entrypoint.sh is copied).

Finally we specify the script to run on startup, docker-entrypoint.sh.

docker-entrypoint.sh

#!/bin/sh

# Copy the certificate and key to the mounted /certs folder so they are available on the host
mkdir -p /certs
cp /etc/ssl/certs/snakeoil.cer /certs/
cp /etc/ssl/private/snakeoil.key /certs/

# Generate the nginx config. ${VAR:-default} falls back to a default when VAR is not set,
# and \$ keeps nginx variables from being expanded by the shell.
cat > /etc/nginx/conf.d/proxy.conf << EOT
map \$http_upgrade \$connection_upgrade {
    default upgrade;
    '' close;
}

server {
    listen 443 ssl default;
    server_name ${SERVER_NAME:-_};

    ssl_certificate /etc/ssl/certs/snakeoil.cer;
    ssl_certificate_key /etc/ssl/private/snakeoil.key;

    location / {
        client_body_buffer_size ${CLIENT_BODY_BUFFER_SIZE:-128k};
        client_max_body_size ${CLIENT_MAX_BODY_SIZE:-16m};

        proxy_set_header Host ${PROXY_HOST:-\$host};
        proxy_set_header X-Forwarded-Proto \$scheme;
        proxy_set_header X-Forwarded-Port \$server_port;
        proxy_set_header X-Forwarded-For \$proxy_add_x_forwarded_for;

        proxy_pass ${PROXY_PASS:-http://upstream};
        proxy_redirect ${PROXY_REDIRECT:-default};

        proxy_http_version 1.1;
        proxy_set_header Upgrade \$http_upgrade;
        proxy_set_header Connection \$connection_upgrade;

        proxy_buffering ${PROXY_BUFFERING:-off};
        proxy_connect_timeout ${PROXY_CONNECT_TIMEOUT:-60s};
        proxy_read_timeout ${PROXY_READ_TIMEOUT:-180s};
        proxy_send_timeout ${PROXY_SEND_TIMEOUT:-60s};
    }
}

server {
    listen 80 default;
    server_name ${SERVER_NAME:-_};
    return 301 https://\$server_name\$request_uri;
}
EOT

# Run nginx in the foreground so the container keeps running
echo "Starting nginx"
exec nginx -g 'daemon off;'

NOTE: Make sure this file is saved with unix-style line endings LF and not Windows-style CRLF.

The entrypoint script just configures nginx and copies the certificate to the certs folder so you can easily access it and import it into your root certificate store.
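The templating in the entrypoint script relies on two shell features: ${VAR:-default} substitutes a default when the variable is unset or empty, and \$ inside an unquoted here-document leaves a literal $ behind for nginx. The following is a minimal sketch of just that mechanism; render_proxy_conf is a hypothetical helper that prints a few of the directives to stdout instead of writing the real config file.

```shell
#!/bin/sh
# Sketch only: render_proxy_conf is a hypothetical helper, not part of the image.
# It prints a few directives to stdout using the same here-document technique.
render_proxy_conf() {
  cat << EOT
server_name ${SERVER_NAME:-_};
proxy_set_header Host ${PROXY_HOST:-\$host};
proxy_pass ${PROXY_PASS:-http://upstream};
proxy_redirect ${PROXY_REDIRECT:-default};
EOT
}

echo "--- defaults (no variables set) ---"
( unset SERVER_NAME PROXY_HOST PROXY_PASS PROXY_REDIRECT; render_proxy_conf )

echo "--- with values like those in docker-compose.yml ---"
( SERVER_NAME=solr; PROXY_PASS=http://solr662:8983; render_proxy_conf )
```

Running it in a subshell with the variables unset shows the fallback values, which is a quick way to see what nginx would receive if an environment variable is missing from docker-compose.yml.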

2. Running the containers and importing the created certificate

To build our custom image and run the services we configured in docker-compose.yml simply run the following command in the same folder as your docker-compose.yml, Dockerfile etc.

docker-compose up -d

If you leave out the -d parameter it will attach to the containers and show log output.

It should result in something like this:

Building proxy

Step 1/8 : FROM nginx:mainline-alpine

---> 5c6da346e3d6

Step 2/8 : ARG SERVER_NAME=solr

---> Using cache

---> 83967fbb9479

Step 3/8 : ENV SSL_CERTIFICATE /etc/ssl/certs/snakeoil.cer

---> Using cache

---> 82b6dfcb6d13

Step 4/8 : ENV SSL_CERTIFICATE_KEY /etc/ssl/private/snakeoil.key

---> Using cache

---> 5e89eeaa6573

Step 5/8 : RUN apk add --no-cache openssl && openssl ecparam -out ${SSL_CERTIFICATE_KEY} -name prime256v1 -genkey && openssl req -new -key ${SSL_CERTIFICATE_KEY} -x509 -sha256 -nodes -days 3650 -subj "/CN=${SERVER_NAME}" -out ${SSL_CERTIFICATE}

---> Using cache

---> b6e33bf3b7e4

Step 6/8 : RUN rm /etc/nginx/conf.d/*.conf

---> Using cache

---> 8def646dfe62

Step 7/8 : COPY . /

---> Using cache

---> 2ca0fb012838

Step 8/8 : ENTRYPOINT /docker-entrypoint.sh

---> Using cache

---> 66b5af8fe6fd

Successfully built 66b5af8fe6fd

Successfully tagged nginx-solr-proxy:latest

Creating docker_solr662_1 ...

Creating docker_sql2017_1 ...

Creating docker_sql2017_1

Creating docker_solr662_1 ... done

Creating docker_proxy_1 ...

Creating docker_sql2017_1 ... done

The first part of the container name will use the name of the current folder, docker in my case.

You should now see three new folders:

- Sqldata

- Solrdata

- Certs

Import the certificate snakeoil.cer from the Certs folder into your root certificate store. Without this the Sitecore Installation Framework (SIF) will not trust the server and will be unable to create the Solr cores etc. as part of the installation.

3. Modify SIF config files

As we don't have a Solr service installed we need to modify the SIF config files. We have to remove the StopSolr and StartSolr tasks from the following config files:

- xconnect-solr.json

- sitecore-solr.json

They are logically located in the Tasks section. You can comment them out or delete them completely. Here is an example from the xconnect-solr.json file:

// ...
"Tasks": {
    // Tasks are separate units of work in a configuration.
    // Each task is an action that will be completed when Install-SitecoreConfiguration is called.
    // By default, tasks are applied in the order they are declared.
    // Tasks may reference Parameters, Variables, and config functions.
    // "StopSolr": {
    //     // Stops the Solr service if it is running.
    //     "Type": "ManageService",
    //     "Params": {
    //         "Name": "[parameter('SolrService')]",
    //         "Status": "Stopped",
    //         "PostDelay": 1000
    //     }
    // },
    // ...
}
// ...

Because we only mounted the Solr home folder instead of the whole Solr installation directory we also have to change the Solr.Server variable to be [variable('Solr.FullRoot')] in the files mentioned above. It should look like below.

// ...

"Solr.Server": "[variable('Solr.FullRoot')]",

// ...

4. Create and run install script with SIF-less

Rob Ahnemann has created this great tool called SIF-less to make it easier to specify the parameters for a SIF install and also to check that everything is set up correctly beforehand.

Check out his initial blog post and the follow up. SIF-less can be downloaded from his Bitbucket repository or from this direct link.

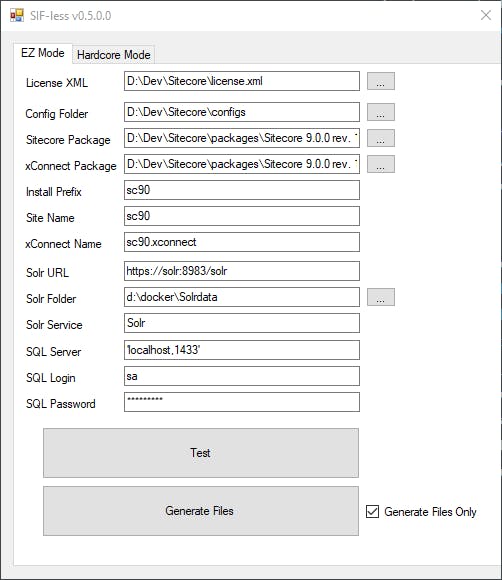

Above are the settings I used. A few notes to be aware of:

- Use 'localhost,1433' as SQL Server (including the single quotes), otherwise the PowerShell script will parse it as two separate values and fail

- Point **Solr Folder** to the mounted `Solrdata` folder

- **Solr Service** can be ignored (might fail if empty, I haven't tried)

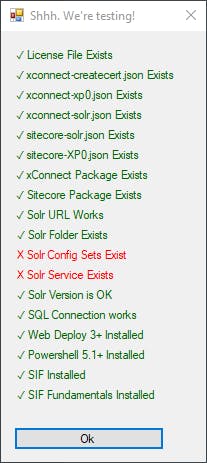

All tests except Solr Config Sets Exists and Solr Service Exists should be green.

Click the Generate Files button and run the generated .ps1 file (something like SIFless-EZ-1511282466.ps1) to begin the installation.

The installation should hopefully complete without any issues. If not, let me know in the comments.

5. Continue with the post-installation steps in Sitecore installation guide

You should finish off your install by following the post-installation steps in the installation guide, starting in chapter 6.

It's mostly the default stuff of rebuilding Search Indexes and the Link Database, deploying Marketing Definitions etc., but step 6.1 requires you to add a recognized user to the xDB Shard Databases.

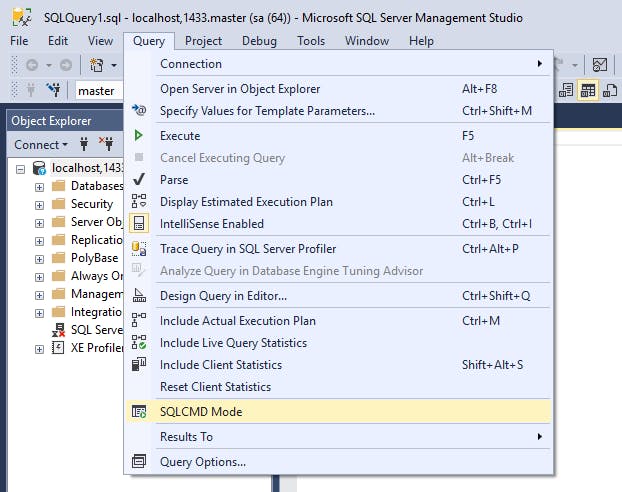

They have included a nifty script to help you with that. Just change the DatabasePrefix to match the prefix you used for your installation. You also have to turn on SQLCMD Mode from the Query menu to be able to successfully run the script.

:SETVAR DatabasePrefix sc90
:SETVAR UserName collectionuser
:SETVAR Password Test12345
:SETVAR ShardMapManagerDatabaseNameSuffix _Xdb.Collection.ShardMapManager
:SETVAR Shard0DatabaseNameSuffix _Xdb.Collection.Shard0
:SETVAR Shard1DatabaseNameSuffix _Xdb.Collection.Shard1
GO

IF(SUSER_ID('$(UserName)') IS NULL)
BEGIN
    CREATE LOGIN [$(UserName)] WITH PASSWORD = '$(Password)';
END;
GO

USE [$(DatabasePrefix)$(ShardMapManagerDatabaseNameSuffix)]
IF NOT EXISTS (SELECT * FROM sys.database_principals WHERE name = N'$(UserName)')
BEGIN
    CREATE USER [$(UserName)] FOR LOGIN [$(UserName)]
    GRANT SELECT ON SCHEMA :: __ShardManagement TO [$(UserName)]
    GRANT EXECUTE ON SCHEMA :: __ShardManagement TO [$(UserName)]
END;
GO

USE [$(DatabasePrefix)$(Shard0DatabaseNameSuffix)]
IF NOT EXISTS (SELECT * FROM sys.database_principals WHERE name = N'$(UserName)')
BEGIN
    CREATE USER [$(UserName)] FOR LOGIN [$(UserName)]
    EXEC [xdb_collection].[GrantLeastPrivilege] @UserName = '$(UserName)'
END;
GO

USE [$(DatabasePrefix)$(Shard1DatabaseNameSuffix)]
IF NOT EXISTS (SELECT * FROM sys.database_principals WHERE name = N'$(UserName)')
BEGIN
    CREATE USER [$(UserName)] FOR LOGIN [$(UserName)]
    EXEC [xdb_collection].[GrantLeastPrivilege] @UserName = '$(UserName)'
END;
GO

Closing notes

This ended up being quite a long blog post. It took me quite some time to put all the pieces together to get everything running, but a lot of that was because I know almost nothing about either Docker or Linux.

In the end I think the required setup/files are actually pretty simple though, considering what is actually being done. All the files can be found on GitHub.

One thing I specifically would like to improve on in the future is the whole SSL certificate setup, which still requires some manual steps and is not fully trusted in the browser.

I hope you enjoyed it all and got it working! If you have any comments, questions or suggestions for improvements please let me know in the comments section.

Troubleshooting

Getting "exec user process caused "no such file or directory"" when starting nginx container

Make sure the docker-entrypoint.sh file is saved with unix-style line endings LF and not Windows-style CRLF.

forums.docker.com/t/standard-init-linux-go-..
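If you are unsure which line endings the file has, something like the following could be used to check and convert it. It is a sketch that assumes a POSIX shell with grep, tr and mktemp available (Git Bash or WSL on Windows); has_crlf and to_lf are hypothetical helper names.

```shell
#!/bin/sh
# Sketch only: has_crlf and to_lf are hypothetical helpers.

# Return 0 if the file contains at least one carriage return (CR) character.
has_crlf() {
  cr="$(printf '\r')"
  grep -q "$cr" "$1"
}

# Rewrite the file in place with LF-only line endings.
# Writing back with cat keeps the original file's permissions.
to_lf() {
  tmp="$(mktemp)"
  tr -d '\r' < "$1" > "$tmp" && cat "$tmp" > "$1"
  rm -f "$tmp"
}

# Convert docker-entrypoint.sh in the current folder if it has CRLF line endings.
if [ -f docker-entrypoint.sh ] && has_crlf docker-entrypoint.sh; then
  to_lf docker-entrypoint.sh
  echo "Converted docker-entrypoint.sh to LF line endings"
fi
```

After converting the file, the image has to be rebuilt (for example with docker-compose up -d --build) so the corrected script is copied into it.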